Amazon Bedrock

Amazon Bedrock is a fully managed service that provides access to powerful foundation models (FMs) from leading AI providers like Anthropic, Meta, and Amazon. It allows developers to easily build and scale generative AI applications without managing infrastructure.

Amazon Bedrock now supports Extended Thinking capabilities for Anthropic Claude models with step-by-step transparency!

Key Features:

- ✅ Enable Extended Thinking: Enhance reasoning for complex tasks with transparent thought processes

- 🎯 Thinking Budget Tokens: Configure the maximum tokens for Claude's internal reasoning

- 🔍 Step-by-step Transparency: See how Claude approaches complex problems

- ⚠️ Cost Impact: Extended thinking consumes additional tokens, increasing usage costs

- 📋 Availability: Available for Claude 3.7 Sonnet and newer versions

To enable models in different AWS regions, refer to the cross-region inference page.
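To make the thinking budget concrete, the following is a minimal sketch of a request body for an Anthropic model on Bedrock with extended thinking enabled. The helper name `build_thinking_request` and its default values are illustrative assumptions, not part of Unstract; the `thinking` block and the `bedrock-2023-05-31` version string follow Anthropic's Messages format as commonly documented for Bedrock, so check them against the current AWS documentation before relying on them.

```python
import json


def build_thinking_request(prompt: str, budget_tokens: int = 5000,
                           max_tokens: int = 8000) -> dict:
    """Return an Anthropic Messages request body with extended thinking on."""
    # Assumption: Anthropic documents a minimum thinking budget of 1,024 tokens.
    if budget_tokens < 1024:
        raise ValueError("budget_tokens should be at least 1024")
    # Assumption: thinking tokens count toward max_tokens, so the budget
    # has to be smaller than the overall output limit.
    if budget_tokens >= max_tokens:
        raise ValueError("budget_tokens must be smaller than max_tokens")
    return {
        "anthropic_version": "bedrock-2023-05-31",
        "max_tokens": max_tokens,
        "thinking": {"type": "enabled", "budget_tokens": budget_tokens},
        "messages": [
            {"role": "user", "content": [{"type": "text", "text": prompt}]}
        ],
    }


if __name__ == "__main__":
    # Print the body that would be passed to InvokeModel as a JSON string.
    print(json.dumps(build_thinking_request("Summarise this invoice."), indent=2))
```

A larger `budget_tokens` value leaves less of `max_tokens` for the visible answer, which is one reason to raise both together.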

Getting started with Amazon Bedrock

You can choose your model based on your requirements; the following steps only show how to configure Anthropic in Bedrock.

Step 1: Set Up Your AWS Account

Pre-requisites

- Active AWS account.

- Necessary permissions: Ensure that your AWS user or role has the necessary permissions to access and use Amazon Bedrock.

Step 2: Access Amazon Bedrock

Via AWS Console

- Go to the AWS Management Console.

- Search for Amazon Bedrock in the search bar.

- Open Amazon Bedrock service page.

Step 3: Model Access

The Model access page in Amazon Bedrock has been retired. Serverless foundation models are now automatically enabled across all AWS commercial regions when first invoked in your account — you no longer need to manually activate model access.

For models served from AWS Marketplace, a user with AWS Marketplace permissions must invoke the model once to enable it account-wide for all users. First-time users of Anthropic models may need to submit use case details before they can access the model.

Choosing an Authentication Type

When configuring a Bedrock LLM or Embedding adapter in Unstract, the Authentication Type dropdown offers three options. Pick the one that matches how your Unstract deployment is hosted:

| Option | When to use | What you supply |
|---|---|---|
| Access Keys | Any deployment (cloud or on-prem) where you have a long-lived AWS Access Key ID and Secret. | AWS Access Key ID, AWS Secret Access Key, Region. |
| IAM Role / Instance Profile (on-prem AWS only) | Unstract is hosted on AWS infrastructure with ambient credentials: EKS pods using IRSA, ECS tasks with a task role, or EC2 instances with an instance profile. | Region only. boto3 resolves credentials from the host's identity. Requires Unstract v0.159.3 or later; see the AWS IRSA Setup guide. |
| Bedrock API Key (Bearer Token) | You want to authenticate with an AWS Bedrock API key (AWS_BEARER_TOKEN_BEDROCK) instead of provisioning an IAM user, which gives you a simpler credential to issue and rotate. Long-term keys only; short-term keys are not supported (Unstract does not refresh the token). | Bedrock API Key (long-term), Region. |

Bedrock API keys are issued per region. The AWS Region you select in Unstract must match the region the key was issued for, otherwise AWS will reject the request.
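The table can be read as a small lookup from authentication type to required fields. The sketch below encodes it that way; the keys (`access_keys`, `iam_role`, `bedrock_api_key`) and field names are hypothetical labels chosen for illustration and are not taken from Unstract's adapter schema.

```python
from __future__ import annotations

# Hypothetical identifiers mirroring the table above; the real adapter
# schema in Unstract may use different names.
REQUIRED_FIELDS = {
    "access_keys": ("aws_access_key_id", "aws_secret_access_key", "region_name"),
    "iam_role": ("region_name",),
    "bedrock_api_key": ("bedrock_api_key", "region_name"),
}


def missing_fields(auth_type: str, config: dict) -> list[str]:
    """Return the required fields that are absent or empty for auth_type."""
    if auth_type not in REQUIRED_FIELDS:
        raise ValueError(f"unknown authentication type: {auth_type!r}")
    return [name for name in REQUIRED_FIELDS[auth_type] if not config.get(name)]


if __name__ == "__main__":
    # An IAM-role setup only needs a region.
    print(missing_fields("iam_role", {"region_name": "us-east-1"}))
```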

The three sections below cover how to obtain credentials for each option. Pick the section that applies to you and skip the others.

Option A — AWS Access Keys

Step 1: Sign In to the AWS Management Console

- Go to the AWS Management Console.

- Log in using your AWS root account or an IAM user with sufficient permissions.

Step 2: Create an IAM User with Programmatic Access

- Navigate to IAM:

  - In the AWS Management Console, search for IAM (Identity and Access Management).

  - Click on IAM to open the IAM dashboard.

- Create New User:

  - In the left sidebar, click on Users.

  - Click the Add user button.

- Set User Details:

  - In the User details step, provide a User name.

  - Under Access type, select Programmatic access to allow access through the AWS CLI, SDKs, or APIs.

- Assign Permissions:

  - In the Set permissions step, choose one of the following options:

    - Attach policies directly: Search for and attach policies that grant the necessary permissions to use Amazon Bedrock.

      Basic policy for on-demand models (e.g., Claude 3 Haiku):

          {
            "Version": "2012-10-17",
            "Statement": [
              {
                "Effect": "Allow",
                "Action": [
                  "bedrock:InvokeModel",
                  "bedrock:InvokeModelWithResponseStream"
                ],
                "Resource": [
                  "arn:aws:bedrock:us-east-1::foundation-model/anthropic.claude-3-haiku-20240307-v1:0"
                ]
              }
            ]
          }

      Policy for newer models requiring inference profiles (e.g., Claude Haiku 4.5, Claude Sonnet 4.6):

      Newer Anthropic models (Claude Haiku 4.5, Claude Sonnet 4.6, etc.) do not support on-demand invocation. They must be accessed through a cross-region inference profile (e.g., us.anthropic.claude-haiku-4-5-20251001-v1:0). The IAM policy must include both the foundation model ARN and the inference profile ARN:

          {
            "Version": "2012-10-17",
            "Statement": [
              {
                "Effect": "Allow",
                "Action": [
                  "bedrock:InvokeModel",
                  "bedrock:InvokeModelWithResponseStream"
                ],
                "Resource": [
                  "arn:aws:bedrock:*::foundation-model/anthropic.claude-haiku-4-5-20251001-v1:0",
                  "arn:aws:bedrock:*:<ACCOUNT_ID>:inference-profile/us.anthropic.claude-haiku-4-5-20251001-v1:0"
                ]
              },
              {
                "Effect": "Allow",
                "Action": [
                  "aws-marketplace:ViewSubscriptions",
                  "aws-marketplace:Subscribe"
                ],
                "Resource": "*"
              }
            ]
          }

      Important Notes

      - Replace <ACCOUNT_ID> with your AWS account ID.

      - The foundation model resource uses a wildcard region (*) because the cross-region inference profile may route requests to any US region.

      - The inference profile resource also needs a wildcard region (*) for the same reason.

      - The aws-marketplace actions require Resource: "*"; they do not support resource-level restrictions. These permissions are needed for first-time Anthropic model access and can be removed afterward.

      For more information, see Amazon Bedrock IAM policies.

    - Add user to group: If you have an existing group with appropriate permissions, you can select that group.

    - Attach customer managed policies: For fine-grained control, create a custom policy (such as restricting access only to Bedrock).

  - Once selected, click Next.
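If you manage several models or accounts, the inference-profile policy from Step 2 can be generated rather than hand-edited. The following is a minimal sketch using only the standard library; the function name `build_inference_profile_policy` and its parameters are illustrative assumptions, and the ARN patterns are copied from the policy shown in Step 2.

```python
import json


def build_inference_profile_policy(account_id: str, model_id: str,
                                   geo_prefix: str = "us",
                                   include_marketplace: bool = True) -> dict:
    """Build an IAM policy document for a cross-region inference profile."""
    statements = [
        {
            "Effect": "Allow",
            "Action": [
                "bedrock:InvokeModel",
                "bedrock:InvokeModelWithResponseStream",
            ],
            # Wildcard regions: the profile may route to any region it covers.
            "Resource": [
                f"arn:aws:bedrock:*::foundation-model/{model_id}",
                f"arn:aws:bedrock:*:{account_id}:inference-profile/{geo_prefix}.{model_id}",
            ],
        }
    ]
    if include_marketplace:
        # Needed for first-time Anthropic model access; can be dropped later.
        statements.append(
            {
                "Effect": "Allow",
                "Action": [
                    "aws-marketplace:ViewSubscriptions",
                    "aws-marketplace:Subscribe",
                ],
                "Resource": "*",
            }
        )
    return {"Version": "2012-10-17", "Statement": statements}


if __name__ == "__main__":
    # 123456789012 is a placeholder account ID.
    policy = build_inference_profile_policy(
        "123456789012", "anthropic.claude-haiku-4-5-20251001-v1:0"
    )
    print(json.dumps(policy, indent=2))
```

Passing `include_marketplace=False` yields the reduced policy you would keep once first-time model access has been completed.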

Step 3: Review and Create User

- Review User Details:

  - Verify the permissions and access settings.

- Create User:

  - Click Create user.

  - After creation, AWS will display a success message with the Access Key ID and Secret Access Key.

Important: Copy the Secret Access Key immediately, as you won't be able to view it again.

Step 4: Store the Access Keys Securely

- Store the Access Key ID and Secret Access Key in a secure location (for example, AWS Secrets Manager, environment variables, or an encrypted file).

- You will use these keys to connect to Unstract.
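As one way to keep the keys out of source files, scripts that need them can read them from the environment at run time. The sketch below assumes the keys were exported under the standard AWS variable names `AWS_ACCESS_KEY_ID` and `AWS_SECRET_ACCESS_KEY`; the helper name `load_access_keys` is hypothetical.

```python
import os
from typing import Mapping, Optional, Tuple


def load_access_keys(env: Optional[Mapping[str, str]] = None) -> Tuple[str, str]:
    """Read the access key pair from environment variables, failing loudly."""
    env = os.environ if env is None else env
    names = ("AWS_ACCESS_KEY_ID", "AWS_SECRET_ACCESS_KEY")
    missing = [name for name in names if not env.get(name)]
    if missing:
        # Report only the variable names, never the secret values.
        raise RuntimeError("missing environment variables: " + ", ".join(missing))
    return env["AWS_ACCESS_KEY_ID"], env["AWS_SECRET_ACCESS_KEY"]


if __name__ == "__main__":
    try:
        key_id, _secret = load_access_keys()
        # Show only the last four characters of the key ID.
        print("Loaded access key ending in", key_id[-4:])
    except RuntimeError as exc:
        print(exc)
```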

Option B — IAM Role / Instance Profile

This option is only valid when Unstract itself is hosted on AWS infrastructure that already has an identity attached — for example, EKS pods bound to an IAM role via IRSA, ECS tasks with a task role, or EC2 instances with an instance profile.

No credentials are entered in the Unstract UI. boto3 picks up the host's identity from the standard AWS credential chain (IRSA web identity token → STS-issued temporary credentials, instance metadata, etc.) and Bedrock requests are signed automatically.

For the end-to-end EKS setup — OIDC provider, trust policy, IAM role, and service account annotation — follow the AWS IRSA Setup guide. Make sure the IAM role attached to the pod has the same Bedrock permissions listed in Option A, Step 2.

The IAM Role / Instance Profile option requires Unstract v0.159.3 or later.
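When this option fails, a common first check is whether the host actually exposes an ambient identity. The sketch below is a debugging heuristic, not what Unstract or boto3 runs internally: it looks for the environment variables that IRSA and container credential providers normally inject. The function name and return labels are hypothetical, and an EC2 instance profile cannot be detected this way because it is served from instance metadata rather than the environment.

```python
import os
from typing import Mapping, Optional


def detect_ambient_identity(env: Optional[Mapping[str, str]] = None) -> str:
    """Guess which ambient credential source the environment advertises."""
    env = os.environ if env is None else env
    # IRSA injects a role ARN and a projected web identity token file.
    if env.get("AWS_ROLE_ARN") and env.get("AWS_WEB_IDENTITY_TOKEN_FILE"):
        return "irsa"
    # ECS task roles (and similar agents) expose a container credentials URI.
    if (env.get("AWS_CONTAINER_CREDENTIALS_RELATIVE_URI")
            or env.get("AWS_CONTAINER_CREDENTIALS_FULL_URI")):
        return "container-credentials"
    # Nothing in the environment; an EC2 instance profile may still apply.
    return "unknown"


if __name__ == "__main__":
    print(detect_ambient_identity())
```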

Option C — Bedrock API Key (Bearer Token)

AWS issues Bedrock API keys that authenticate via a single Authorization: Bearer <token> header — no IAM user, no SigV4 signing. This is the AWS-recommended path for workflows that need a simpler credential than long-lived access keys.

The Bedrock API Key (Bearer Token) option is available from the next on-prem release after v0.162.0 (and on Unstract Cloud once the same SDK version rolls out).

Unstract stores the Bedrock API key as static adapter metadata and does not auto-refresh it. The bearer token must therefore be a long-term API key.

Short-term API keys are not supported — they expire when the AWS console session that minted them ends (12 hours by default), after which every Bedrock call from Unstract will fail with a 401. Adapters configured with a short-term key will silently stop working once the key expires.

Step 1: Generate a long-term Bedrock API Key

- Sign in to the AWS Management Console and open Amazon Bedrock.

- Switch to the AWS Region you intend to call Bedrock from — API keys are region-scoped, so the key must be issued in the same region you will configure in Unstract.

- From the Bedrock left sidebar, open the API keys page.

- Open the Long-term API keys tab and click Generate long-term API keys.

- Set an expiry that fits your rotation policy and confirm.

- Copy the displayed key. AWS shows the secret value only once.

For more details, see the AWS guide on Generating Amazon Bedrock API keys.

Step 2: Ensure the underlying IAM identity has Bedrock permissions

A Bedrock API key inherits the permissions of the IAM user or role that created it. The IAM identity needs at minimum bedrock:InvokeModel (and bedrock:InvokeModelWithResponseStream for streaming) on the models you plan to use — see the policy examples in Option A, Step 2.
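A quick way to confirm that a key and its underlying permissions work is to call Bedrock directly with the bearer header. The sketch below only builds the request with the standard library; the endpoint pattern and the Converse body shape follow AWS's published examples as far as known, while the function names and the sample model ID are illustrative assumptions. Nothing is sent unless you call `send` yourself.

```python
import json
import urllib.parse
import urllib.request


def build_converse_request(api_key: str, region: str, model_id: str,
                           prompt: str) -> urllib.request.Request:
    """Build (but do not send) a Converse request using a Bedrock API key."""
    # Keep ':' readable; anything else unusual (such as '/') is percent-encoded.
    encoded_id = urllib.parse.quote(model_id, safe=":")
    url = f"https://bedrock-runtime.{region}.amazonaws.com/model/{encoded_id}/converse"
    body = {"messages": [{"role": "user", "content": [{"text": prompt}]}]}
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> dict:
    """Send the request and decode the JSON response (performs a network call)."""
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.loads(response.read().decode("utf-8"))


if __name__ == "__main__":
    req = build_converse_request(
        "REPLACE_WITH_KEY", "us-east-1",
        "us.anthropic.claude-haiku-4-5-20251001-v1:0", "Hello",
    )
    print(req.get_method(), req.full_url)
```

Because the region is part of the hostname, a key issued for another region is rejected at this step, which makes the sketch a convenient check for the region mismatch described earlier.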

Step 3: Store the API Key Securely

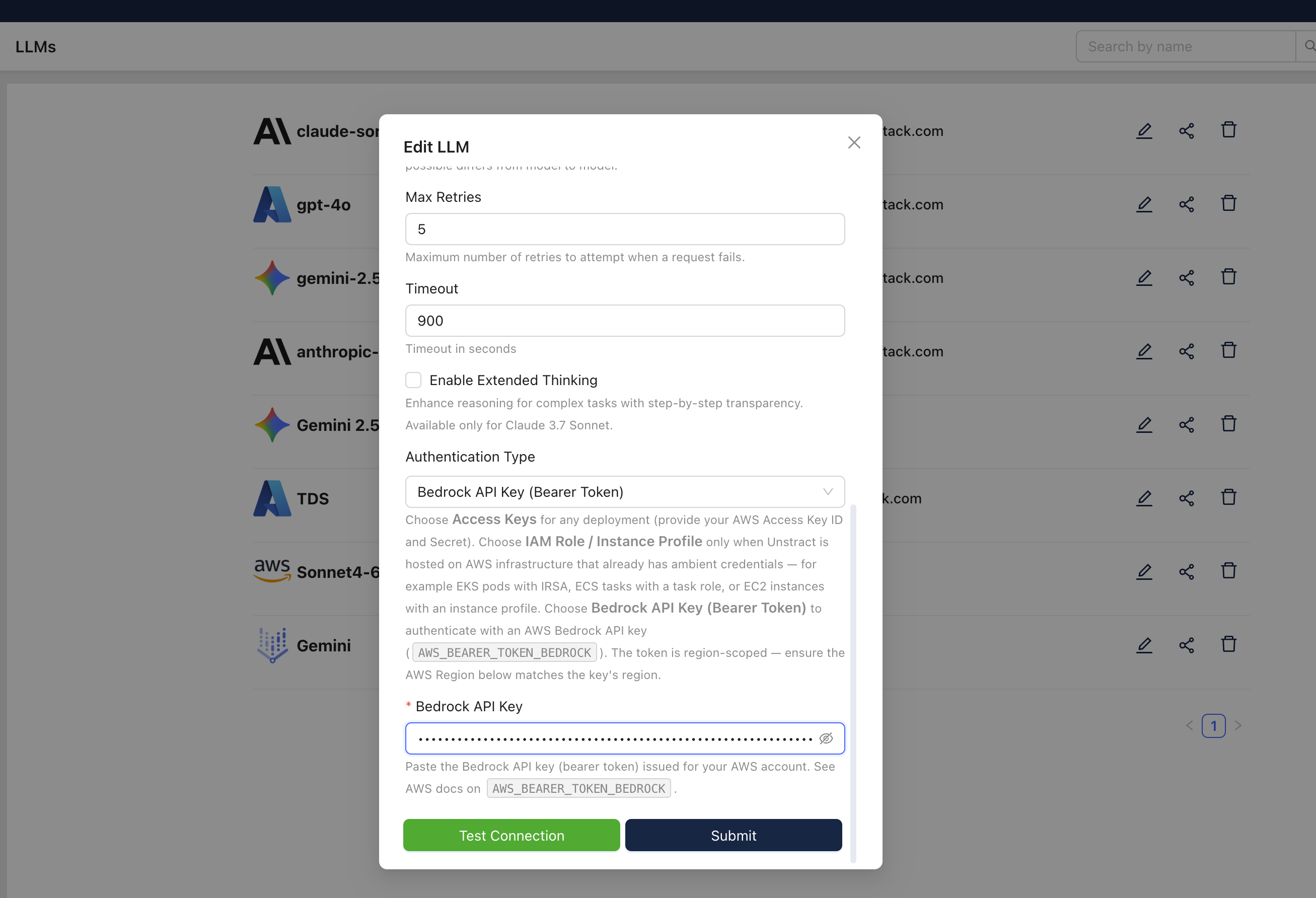

Treat the Bedrock API key like any other long-form secret: store it in AWS Secrets Manager, a CI secret store, or your password manager. You will paste it into the Unstract UI in the next section — in the Bedrock API Key field shown below:

Setting up the Anthropic LLM model in Unstract

Now that we have a model available and the required credentials, we can use them to set up an LLM profile on the Unstract platform. For this:

- Sign in to the Unstract Platform

- From the side navigation menu, choose Settings 🞂 LLMs.

- Click on the New LLM Profile button.

- From the list of LLMs, choose Bedrock. You should see a dialog box where you enter details.

If you're using Anthropic Claude 3.7 Sonnet through Bedrock, you'll have additional options:

Enable Extended Thinking

- ☑️ Check this option to activate enhanced reasoning with step-by-step transparency

- Available for Claude 3.7 Sonnet and newer versions

Thinking Budget Tokens

- 🎯 Token Budget: Set the maximum tokens for Claude's internal reasoning

- 💡 Recommendation: Start with 5000 tokens for most use cases

- 📈 Higher Budget: More tokens = more detailed reasoning but increased costs

⚠️ Important: Extended thinking consumes additional tokens, which will increase your AWS usage costs

- For Name, enter a name for this connector.

- In the Model Name field, enter the model which you deployed.

- For AWS Region, enter the region your model (or Bedrock API key) is provisioned in, e.g., us-east-1.

- For Authentication Type, pick the option that matches your setup:

  - Access Keys: paste the AWS Access Key ID and AWS Secret Access Key generated in Option A.

  - IAM Role / Instance Profile (on-prem AWS only): leave the key fields empty; the host's IAM identity is used automatically. See Option B.

  - Bedrock API Key (Bearer Token): paste the key generated in Option C into the Bedrock API Key field. Make sure the AWS Region above matches the key's region.

- Leave the Max retries and Timeout fields at their default values.

- For the Maximum Output Tokens, enter the maximum token limit supported by the model, or leave it empty to use the default maximum.

- For Claude 3.7 Sonnet and newer: Enable extended thinking and set your preferred thinking budget tokens.

- Click on Test Connection and ensure it succeeds. You can finally click on Submit and that should create a new LLM Profile for use in your Unstract projects.

The same three Authentication Type options are available for the Amazon Bedrock embedding adapter (under Settings 🞂 Embeddings). The fields and behaviour are identical — only the model list differs.

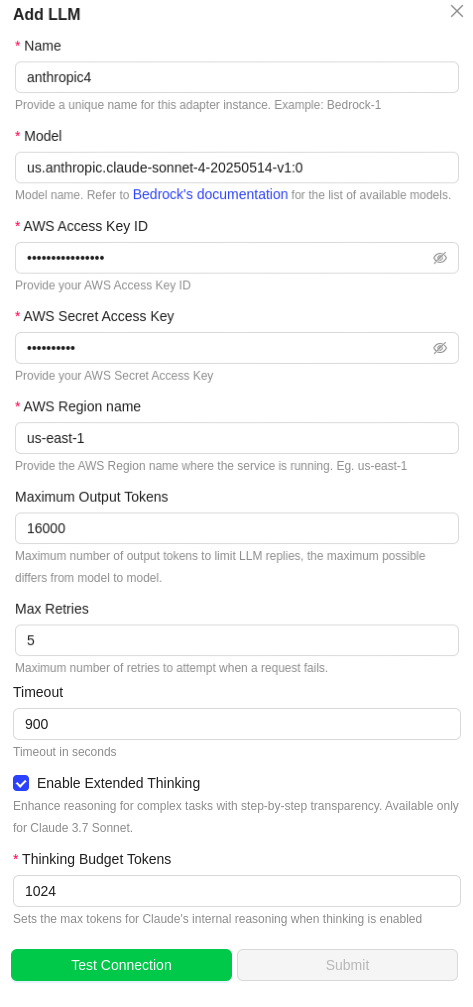

Using Cross-Region Inference Models

Amazon Bedrock supports cross-region inference, which allows you to access foundation models across different AWS regions for improved availability and performance. With Unstract's Bedrock adapter, you can easily use cross-region inference models.

Understanding Inference Profiles

Newer Anthropic models on Bedrock (Claude Haiku 4.5, Claude Sonnet 4.6, and newer) require cross-region inference profiles. They cannot be invoked directly using the foundation model ID.

- On-demand models (e.g., anthropic.claude-3-haiku-20240307-v1:0): Can be invoked directly with the model ID.

- Inference-profile-only models (e.g., anthropic.claude-haiku-4-5-20251001-v1:0): Must use the inference profile ID with the us. prefix (e.g., us.anthropic.claude-haiku-4-5-20251001-v1:0).

If you use a model ID without the us. prefix for these newer models, you will receive the error:

"Invocation of model ID ... with on-demand throughput isn't supported. Retry your request with the ID or ARN of an inference profile that contains this model."
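The prefix rule can be captured in a small helper that turns a foundation model ID into an inference profile ID and leaves already-prefixed IDs alone. This is a sketch under stated assumptions: the function name is hypothetical, and the list of geography prefixes is a non-exhaustive guess based on AWS naming (this page only documents `us.`).

```python
# Assumption: a non-exhaustive set of geography prefixes used by
# cross-region inference profiles; only "us." is confirmed on this page.
GEO_PREFIXES = ("us.", "eu.", "apac.")


def to_inference_profile_id(model_id: str, geo: str = "us") -> str:
    """Prefix a foundation model ID with a geography unless it already has one."""
    if model_id.startswith(GEO_PREFIXES):
        return model_id
    return f"{geo}.{model_id}"


if __name__ == "__main__":
    print(to_inference_profile_id("anthropic.claude-haiku-4-5-20251001-v1:0"))
```

On-demand models such as Claude 3 Haiku should keep their plain model ID, so apply the helper only to models that require an inference profile.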

Configuring Cross-Region Inference in Unstract

- Access the Cross-Region Inference Console:

  - In the Amazon Bedrock console, navigate to the Cross-region inference section.

  - Here you'll find the available models with their corresponding Model IDs and Model ARNs.

- Configure in Unstract:

  - When setting up your LLM profile in Unstract, use the inference profile Model ID (e.g., us.anthropic.claude-haiku-4-5-20251001-v1:0) in the Model Name field.

- Ensure IAM Permissions:

  - Your IAM policy must include both the foundation model and inference profile resource ARNs with wildcard regions (see the IAM policy example in Option A, Step 2).

For more detailed information about cross-region inference, refer to the AWS Bedrock Cross-Region Inference documentation.

Note: All other configuration steps remain the same - you only need to replace the model identifier in the Model Name field.
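Outside Unstract, the same substitution applies when calling Bedrock from your own code: the inference profile ID simply goes where the model ID would. The following is a minimal sketch assuming `boto3` is installed and credentials are available through one of the options above; `build_converse_kwargs` and `ask` are hypothetical helper names, while `converse` is the Bedrock Runtime operation exposed by boto3.

```python
def build_converse_kwargs(model_id: str, prompt: str, max_tokens: int = 256) -> dict:
    """Assemble keyword arguments for the Bedrock Runtime converse call."""
    return {
        # An inference profile ID is passed in the same modelId parameter.
        "modelId": model_id,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens},
    }


def ask(model_id: str, prompt: str, region: str = "us-east-1") -> str:
    """Send one prompt through Bedrock and return the first text block."""
    import boto3  # third-party; imported lazily so the helper above has no dependency

    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.converse(**build_converse_kwargs(model_id, prompt))
    return response["output"]["message"]["content"][0]["text"]


if __name__ == "__main__":
    # Performs a real, billable request when run with valid credentials.
    print(ask("us.anthropic.claude-haiku-4-5-20251001-v1:0", "Say hello in one word."))
```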

APAC Region Costing: Costing information for models in the APAC region is currently unavailable. This is expected to be fixed in the future.